MySQL with AI Inside

Most AI integrations with a database follow the same pattern: your application fetches rows, ships them to an AI API, waits for a response, then writes the result back. It works, but it means adding a service layer to do something that's conceptually simple—apply a function to data. If you want to classify every product description in a table, you write a loop. If you want to run it on inserts, you write a background job.

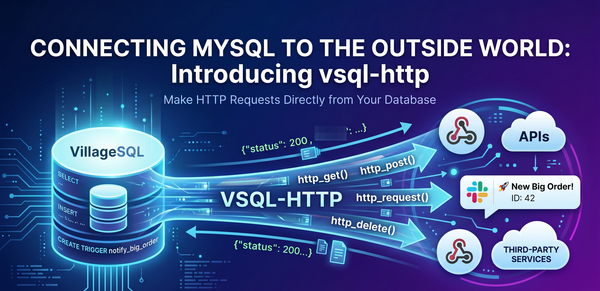

The VillageSQL vsql-ai extension cuts out the middle tier by adding AI functions directly to MySQL. You can call Claude, Gemini, GPT, or a local Ollama model using the same SQL syntax you'd use for UPPER() or JSON_VALUE().

The extension gives you two functions: AI_PROMPT() for text generation and AI_EMBEDDING() for vector representations. Both take a provider, a model name, an API key, and the input text. The supported providers are Anthropic, Google, OpenAI, and local Ollama—so if you want to keep everything on-premises, you can.

A basic prompt looks like this:

    SET @api_key = 'your-anthropic-api-key';

    SELECT AI_PROMPT(
        'anthropic',
        'claude-sonnet-4-6',
        @api_key,
        'Summarize this in one sentence: MySQL is an open-source relational database...'
    ) AS summary;

That returns the model's plain-text response as a string. You can pipe it anywhere MySQL accepts a string—store it in a column, use it in a CONCAT, or filter on it.

This is useful for running prompts across entire tables. Suppose you have a reviews table with free-text feedback and you want a sentiment column. Instead of exporting the data and writing a script, you can do it in one pass:

    SET @api_key = 'your-api-key';

    UPDATE reviews
    SET sentiment = AI_PROMPT(
        'anthropic',
        'claude-sonnet-4-6',
        @api_key,
        CONCAT(
            'Classify the sentiment of this review as POSITIVE, NEGATIVE, or NEUTRAL. ',
            'Reply with exactly one word. Review: ',
            content
        )
    )
    WHERE sentiment IS NULL;

The model gets called once per row. No loop, no script, no application code to maintain. Embeddings work the same way. AI_EMBEDDING() returns a JSON array of floats you can store in a JSON column for similarity lookups:

    SET @api_key = 'your-google-api-key';

    CREATE TABLE documents (
        id INT PRIMARY KEY,
        content TEXT,
        embedding JSON
    );

    INSERT INTO documents (id, content, embedding)
    VALUES (
        1,
        'VillageSQL is a drop-in replacement for MySQL with extensions.',
        CONVERT(AI_EMBEDDING('google', 'gemini-embedding-001', @api_key,
            'VillageSQL is a drop-in replacement for MySQL with extensions.') USING utf8mb4)
    );

The embedding vector lands in the table alongside the source text. From there you can compute cosine similarity in SQL or use it to power search ranking inside a stored procedure. Native vector storage is coming to VillageSQL. AI_EMBEDDING() is how you generate the vectors that will feed it.
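As a rough sketch of the cosine-similarity step, the query below compares document 1 against every other row in the documents table. It assumes the embedding column holds a flat JSON array of numbers, that all vectors have the same length, and that JSON_TABLE() behaves as it does in stock MySQL 8.0. It is one way to do this, not something taken from the vsql-ai documentation.

```sql
-- Rank other documents by cosine similarity to document 1.
-- JSON_TABLE() expands each JSON array into (idx, val) rows;
-- matching on idx pairs up the vector components.
SELECT
    d.id,
    SUM(qv.val * dv.val)
        / (SQRT(SUM(qv.val * qv.val)) * SQRT(SUM(dv.val * dv.val)))
        AS cosine_similarity
FROM documents AS q,
     documents AS d,
     JSON_TABLE(q.embedding, '$[*]'
         COLUMNS (idx FOR ORDINALITY, val DOUBLE PATH '$')) AS qv,
     JSON_TABLE(d.embedding, '$[*]'
         COLUMNS (idx FOR ORDINALITY, val DOUBLE PATH '$')) AS dv
WHERE q.id = 1
  AND d.id <> q.id
  AND dv.idx = qv.idx
GROUP BY d.id
ORDER BY cosine_similarity DESC
LIMIT 5;
```

Expanding every vector on each query is fine for a few thousand rows but will not scale far beyond that.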

The most immediate use is automated classification on inserts. Here's a trigger that calls an AI model every time a support ticket is created and writes a priority label back to the row:

    DELIMITER //

    CREATE TRIGGER classify_ticket
    BEFORE INSERT ON support_tickets
    FOR EACH ROW
    BEGIN
        -- Local variable, so the caller's session variables are left untouched.
        DECLARE v_api_key TEXT;

        SELECT api_key INTO v_api_key
        FROM api_keys
        WHERE provider = 'anthropic'
        LIMIT 1;

        SET NEW.priority = AI_PROMPT(
            'anthropic',
            'claude-haiku-4-5-20251001',
            v_api_key,
            CONCAT(
                'Classify this support ticket as LOW, MEDIUM, or HIGH priority. ',
                'Reply with exactly one word. Ticket: ',
                NEW.description
            )
        );
    END //

    DELIMITER ;

This example uses Claude's Haiku model rather than Sonnet because it's faster and cheaper for classification tasks. The INSERT doesn't complete until the trigger does, so latency matters. Store your API key in a dedicated table with restricted grants rather than inline in the trigger body. A key hardcoded in a trigger definition shows up in SHOW CREATE TRIGGER output, which is a security risk.
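One possible layout for that key table is sketched below. The schema name appdb and the accounts ticket_admin and app_user are hypothetical, and both accounts are assumed to exist already. The sketch relies on standard MySQL behavior: a trigger runs with the privileges of its definer, so the inserting account never needs to read api_keys itself.

```sql
-- Hypothetical names throughout: schema `appdb`, accounts
-- 'ticket_admin'@'localhost' (defines the trigger) and
-- 'app_user'@'localhost' (the application).
CREATE TABLE appdb.api_keys (
    provider VARCHAR(32)  NOT NULL PRIMARY KEY,
    api_key  VARCHAR(255) NOT NULL
);

INSERT INTO appdb.api_keys (provider, api_key)
VALUES ('anthropic', 'your-anthropic-api-key');

-- The trigger definer can read keys and can create and run the trigger,
-- including reading NEW.description and setting NEW.priority.
GRANT SELECT ON appdb.api_keys TO 'ticket_admin'@'localhost';
GRANT TRIGGER, SELECT, UPDATE ON appdb.support_tickets
    TO 'ticket_admin'@'localhost';

-- The application gets table-level grants that do not include api_keys.
GRANT SELECT, INSERT ON appdb.support_tickets TO 'app_user'@'localhost';
```

With grants scoped to individual tables like this, a SELECT on api_keys from the application account is rejected, while its INSERTs into support_tickets still trigger classification.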

AI API calls can be a lot slower than a local SQL operation. In our testing on a local llama3.2 model, a 20-row sentiment classification ran in about 4 seconds, roughly 0.2 seconds per row. Embeddings via nomic-embed-text ran at about 0.7 seconds per row. This is slower than generation because the embedding model is doing more numeric work per call. Cloud models will vary depending on network and load. You'll want to set a session timeout before running bulk queries. Note that in stock MySQL, max_execution_time applies only to read-only SELECT statements, so it bounds bulk SELECTs but will not interrupt a long-running UPDATE:

SET SESSION max_execution_time = 60000; -- 60 seconds

For large tables, process them in batches (with a LIMIT clause and a loop) rather than running a single UPDATE against millions of rows. Rate limits also apply. Anthropic and Google both cap requests per minute, and the error messages from AI_PROMPT() will tell you when you hit them.
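The batching approach might look like the stored procedure below. This is a sketch under assumptions: the procedure name, the batch size parameter, and the one-second pause are illustrative, and it reuses the reviews and api_keys tables from the earlier examples. How AI_PROMPT() reports a failure (an SQL error or an error string in the result) is not covered here, so check that before pointing this at real data.

```sql
DELIMITER //

-- Hypothetical helper: classify unlabelled reviews a batch at a time.
CREATE PROCEDURE classify_reviews_in_batches(IN batch_size INT)
BEGIN
    DECLARE rows_changed INT DEFAULT 1;
    DECLARE v_api_key TEXT;

    SELECT api_key INTO v_api_key
    FROM api_keys
    WHERE provider = 'anthropic'
    LIMIT 1;

    -- Stop once a batch changes no rows: either nothing is left to
    -- classify, or every call in the batch returned NULL.
    WHILE rows_changed > 0 DO
        UPDATE reviews
        SET sentiment = AI_PROMPT(
            'anthropic',
            'claude-sonnet-4-6',
            v_api_key,
            CONCAT(
                'Classify the sentiment of this review as POSITIVE, NEGATIVE, or NEUTRAL. ',
                'Reply with exactly one word. Review: ',
                content
            )
        )
        WHERE sentiment IS NULL
        LIMIT batch_size;

        SET rows_changed = ROW_COUNT();

        -- Brief pause between batches to stay under provider rate limits.
        DO SLEEP(1);
    END WHILE;
END //

DELIMITER ;

CALL classify_reviews_in_batches(50);
```

With autocommit enabled, each batch commits on its own, so an interrupted run keeps the rows it has already labelled and can simply be called again.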

If you'd rather not send data to a cloud API at all, the local provider connects to an Ollama instance running on 127.0.0.1:11434. Neither an API key nor an external network call is required:

SELECT AI_PROMPT('local', 'llama3.2', '', 'What is 2+2?');

You can pull any model you want in Ollama and it's available in SQL.
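The same should hold for embeddings. The sketch below assumes AI_EMBEDDING() accepts the local provider with an empty key in the same way AI_PROMPT() does, and that the nomic-embed-text model has already been fetched on the database host with `ollama pull nomic-embed-text`. Consult the function documentation for the exact behavior.

```sql
-- Generate an embedding locally and report how many dimensions it has.
-- The CONVERT ... USING utf8mb4 wrapper mirrors the earlier INSERT example.
SET @vec = CONVERT(
    AI_EMBEDDING('local', 'nomic-embed-text', '',
        'VillageSQL is a drop-in replacement for MySQL with extensions.')
    USING utf8mb4
);

SELECT JSON_LENGTH(@vec) AS dimensions;
```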

Get Started

We built vsql-ai for the cases where the simplest path is just calling the function (no data pipeline, no service layer, no event queue). It won't replace a dedicated ML infrastructure for high-volume workloads, but for classification jobs, text enrichment, embedding generation, and lightweight inference it means less code and fewer moving parts.

The source, installation instructions, and full function documentation are at vsql-ai on GitHub.

Get started with VillageSQL at villagesql.com